
Calibration

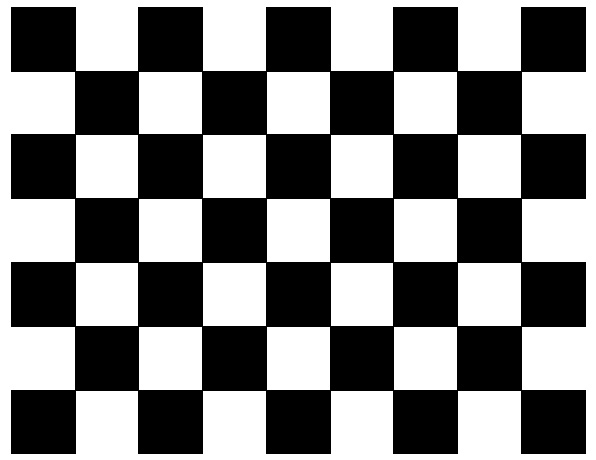

To obtain the internal and external camera geometry, as well as the geometric relation between the two cameras, the algorithm proposed by Zhengyou Zhang, which is described in [Zha00], was used. To calibrate the cameras, the calibration routine needs at least two observations of a planar pattern in different orientations. The OpenCV implementation uses a chessboard pattern, as shown in Figure 3.4.

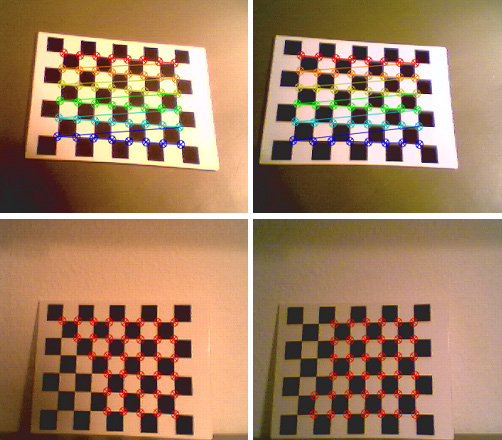

The benefits of this calibration technique are its simplicity, flexibility, robustness and low cost; moreover, it is already implemented in OpenCV. To calibrate a stereo system with this technique, one only has to specify the number of internal corners, the size of the squares in mm and the number of views to be used. For example, the calibration pattern shown in Figure 3.4 contains 8 x 6 internal corners, and when printed on an A4 sheet its squares measure 18 mm x 18 mm. For the calculation, ten different views of the calibration pattern are used; in each view the pattern has to be visible in both images, i.e. in the left and the right view. The first step of the implementation is to locate the internal chessboard corners. For example, an ordinary chessboard has 8 x 8 squares and 7 x 7 internal corners, that is, points where the squares touch each other. Initially, the corners are located approximately, and each corner that is found is highlighted in the image. The two images at the bottom of Figure 3.5 show the result of this process.
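The pattern geometry described above can be sketched in a few lines of Python. The sketch assumes that the corners are listed row by row and places the pattern in its own plane Z = 0, which is how Zhang's method treats the model points of a planar target. A list of this form is what OpenCV's `calibrateCamera` expects as object points, while `findChessboardCorners` supplies the matching image coordinates; the helper names `internal_corners` and `chessboard_object_points` are hypothetical.

```python
def internal_corners(squares_x, squares_y):
    """A chessboard with n x m squares has (n - 1) x (m - 1) internal corners."""
    return squares_x - 1, squares_y - 1


def chessboard_object_points(corners_x, corners_y, square_mm):
    """Return the 3-D model coordinates (in mm) of the internal corners.

    The pattern is planar, so every corner lies in the plane Z = 0 of the
    pattern's own coordinate system. Points are listed row by row, which must
    match the order in which the corners were detected in the image.
    """
    return [
        (col * square_mm, row * square_mm, 0.0)
        for row in range(corners_y)
        for col in range(corners_x)
    ]


# Pattern from Figure 3.4: 8 x 6 internal corners, 18 mm squares on A4.
points = chessboard_object_points(8, 6, 18.0)
print(len(points))
print(points[-1])
print(internal_corners(8, 8))
```

For the pattern of Figure 3.4 this yields 48 model points, the last one at (126 mm, 90 mm, 0).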

If all corners of the chessboard pattern have been found, the highlight changes its color and the individual corners are connected. The two images at the top of Figure 3.5 depict this situation. At this stage, the algorithm refines the corner positions to subpixel accuracy [Res01]. If the pattern is found in both images, the coordinates of the corner candidates are stored for the subsequent calculation. Once enough patterns have been found, the algorithm computes the intrinsic and extrinsic camera parameters. It starts with an analytical solution, which is then refined by a non-linear optimization technique based on the maximum likelihood criterion. The resulting calibration file is shown in Table 3.1, where
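The maximum likelihood refinement in [Zha00] minimizes the sum of squared distances between the detected corners and the model points reprojected with the current parameter estimate. The following sketch computes only this cost, as a root-mean-square value in pixels for a single view; it is not the optimizer itself. It assumes an ideal pinhole camera without lens distortion, and the names `project` and `rms_reprojection_error` as well as all numeric values in the usage lines are hypothetical.

```python
import math


def project(point, K, R, t):
    """Project a 3-D point (mm) to pixel coordinates with an ideal pinhole model.

    K = (fx, fy, cx, cy) holds the intrinsic parameters in pixels, R is a 3x3
    rotation matrix given as a list of rows and t a translation vector in mm.
    Lens distortion is ignored in this sketch.
    """
    fx, fy, cx, cy = K
    # Transform the point from pattern (world) coordinates to camera coordinates.
    xc, yc, zc = (sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3))
    # Perspective division, then scaling by the focal lengths and shifting by the center.
    return fx * xc / zc + cx, fy * yc / zc + cy


def rms_reprojection_error(object_points, image_points, K, R, t):
    """Root-mean-square distance in pixels between detected and reprojected corners."""
    squared = 0.0
    for X, (u, v) in zip(object_points, image_points):
        pu, pv = project(X, K, R, t)
        squared += (pu - u) ** 2 + (pv - v) ** 2
    return math.sqrt(squared / len(object_points))


# Usage with hypothetical values: pattern 500 mm in front of an unrotated camera.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
K = (800.0, 800.0, 320.0, 240.0)
t = (0.0, 0.0, 500.0)
corners = [(0.0, 0.0, 0.0), (18.0, 0.0, 0.0)]
detected = [(320.5, 240.0), (348.8, 239.5)]
print(rms_reprojection_error(corners, detected, K, identity, t))
```

In the OpenCV Python bindings, `cv2.calibrateCamera` returns an overall RMS reprojection error of this kind as its first value, which gives a convenient check of the calibration quality.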


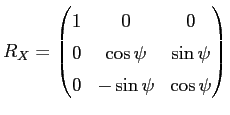

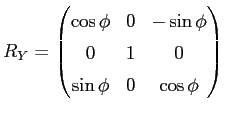

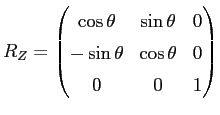

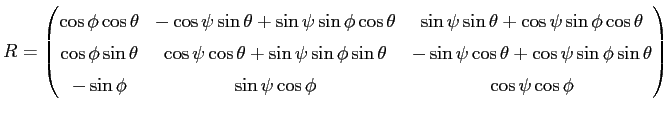

the first two diagonal elements of the camera matrix are the effective focal lengths in the x and y directions, given in pixels. The third and sixth values are the coordinates of the camera center (principal point), also in pixels. The translation vector is given in mm and describes the translation between the camera and the world coordinate system. The rotation matrix specifies the rotation between the camera and the world coordinate system and is defined as the composition of the rotations by the roll, pitch and yaw angles around the X, Y and Z axes, respectively.

| (3.2) |

| (3.3) |

| (3.4) |

| (3.5) |
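The composition of the three elementary rotations can be sketched as follows. The order R = Rz(yaw) Ry(pitch) Rx(roll) and the right-handed sign convention are assumptions (a common choice for roll, pitch and yaw), so the order used in equations (3.2) to (3.5) may differ; all function names are hypothetical.

```python
import math


def rot_x(a):
    """Rotation by angle a (radians) about the X axis (roll)."""
    c, s = math.cos(a), math.sin(a)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_y(a):
    """Rotation by angle a (radians) about the Y axis (pitch)."""
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def rot_z(a):
    """Rotation by angle a (radians) about the Z axis (yaw)."""
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def matmul(A, B):
    """Product of two 3x3 matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_from_rpy(roll, pitch, yaw):
    """Compose R = Rz(yaw) * Ry(pitch) * Rx(roll) (assumed convention)."""
    return matmul(rot_z(yaw), matmul(rot_y(pitch), rot_x(roll)))


R = rotation_from_rpy(0.1, -0.2, 0.3)
for row in R:
    print(row)
```

Whatever order is chosen, the result is orthonormal with determinant 1. Note that OpenCV itself stores the rotation of each calibration view as a Rodrigues vector, which `cv2.Rodrigues` converts to a 3x3 matrix.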

After the images have been transferred to memory, the result of the calibration is used to rectify them. The benefit is that corresponding elements then lie on the same horizontal scan line in both images. Without rectification, a point-to-line correspondence can also be established, but the search has to follow the epipolar line, which is in general not parallel to the image rows. After rectification, the epipolar lines coincide with the image rows, so there is no further need to consider the epipolar geometry explicitly.
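The advantage of rectification can be illustrated by a one-dimensional correspondence search: because corresponding points lie on the same row, a pixel of the left image only has to be compared with pixels of the same row in the right image. The sketch below uses a sum-of-absolute-differences (SAD) window cost, which is an assumed, commonly used matching cost and not necessarily the method used in this work; it also assumes that both rows have the same length, and the function name is hypothetical.

```python
def match_on_scanline(left_row, right_row, x, half_window, max_disparity):
    """Find the disparity of pixel x of the left row by searching the right row.

    After rectification the corresponding point lies on the same image row,
    so only a 1-D search over disparities d (right x = left x - d) is needed.
    The cost is the sum of absolute differences (SAD) over a small window.
    Both rows are assumed to have the same length.
    """
    best_d, best_cost = None, float("inf")
    for d in range(max_disparity + 1):
        xr = x - d
        # Skip disparities whose window would leave either row.
        if xr - half_window < 0 or x + half_window >= len(left_row):
            continue
        cost = sum(
            abs(left_row[x + k] - right_row[xr + k])
            for k in range(-half_window, half_window + 1)
        )
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


# Right row = left row shifted three pixels to the left.
left = [0, 0, 0, 0, 10, 50, 90, 10, 0, 0, 0, 0]
right = left[3:] + [0, 0, 0]
print(match_on_scanline(left, right, 5, 1, 4))
```

Here the right row is the left row shifted by three pixels, so the search recovers a disparity of 3 for the pixel on the shifted feature; if no window fits inside the row, the function returns `None`.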
